Higgs over easy

12 October 2016

Nothing beats a good hot cup of coffee at Mel’s Drive-In. Add a couple of eggs, toast, and hash browns, and we are in for a nice, slow, lazy morning in San Francisco. Well, maybe not too lazy. The plenary session starts in half an hour. Still, plenty of time to enjoy a traditional American bottomless cup of brew.

My colleagues and I are in town to attend the 22nd International Conference on Computing in High Energy and Nuclear Physics (CHEP 2016, for short). I like to think of us as the nerds of the nerds. Computing, networking, software, middleware, bandwidth, and processors are the topics of discussion, and there is indeed much to talk about.

Frankly, we have no idea how we are going to achieve the level of computing needed to handle the data rates of the High-Luminosity Large Hadron Collider (HL-LHC), expected to start up in the early 2020s. That’s not far away, and the amount of data to process will be up to 100 times the current rate. Yes, that’s 100 times a rate that is already producing thousands of terabytes of data each year.

Let’s imagine Mel’s Drive-In facing this same problem. During the 15 minutes it took me to gulp down my perfectly cooked eggs and generous serving of hash browns, around 30 to 40 people came through Mel’s. That’s a throughput of about 2 people per minute. Now, imagine the challenge if 100 times as many, some 200 people, started coming up to the “Please wait to be seated” sign every minute. The line would go out the door and around the block. Even worse, I doubt the waitress would be back every 5 minutes to “heat up” my coffee. And that would be a disaster.
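For the nerds keeping score, here is a minimal sketch of that back-of-the-envelope arithmetic in Python. The variable and function names are my own, and the factor of 100 is simply borrowed from the HL-LHC estimate; nothing here is a real model of a diner (or of a collider).

```python
# Back-of-the-envelope diner throughput, using the numbers from the story.
# All names here are illustrative assumptions, not part of any real tool.

customers_low, customers_high = 30, 40  # people who came through during breakfast
minutes_observed = 15                   # time spent eating
scale_factor = 100                      # "up to 100 times the current rate"


def throughput(customers, minutes):
    """Return the arrival rate in people per minute."""
    return customers / minutes


current_low = throughput(customers_low, minutes_observed)
current_high = throughput(customers_high, minutes_observed)

# The story rounds the current rate down to "about 2 people per minute".
rounded_current = 2
scaled = rounded_current * scale_factor

print(f"current rate: {current_low:.1f} to {current_high:.1f} people per minute")
print(f"scaled by {scale_factor}x: {scaled} people per minute")
```

Even with the generous rounding, the scaled-up line is the kind that goes around the block.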

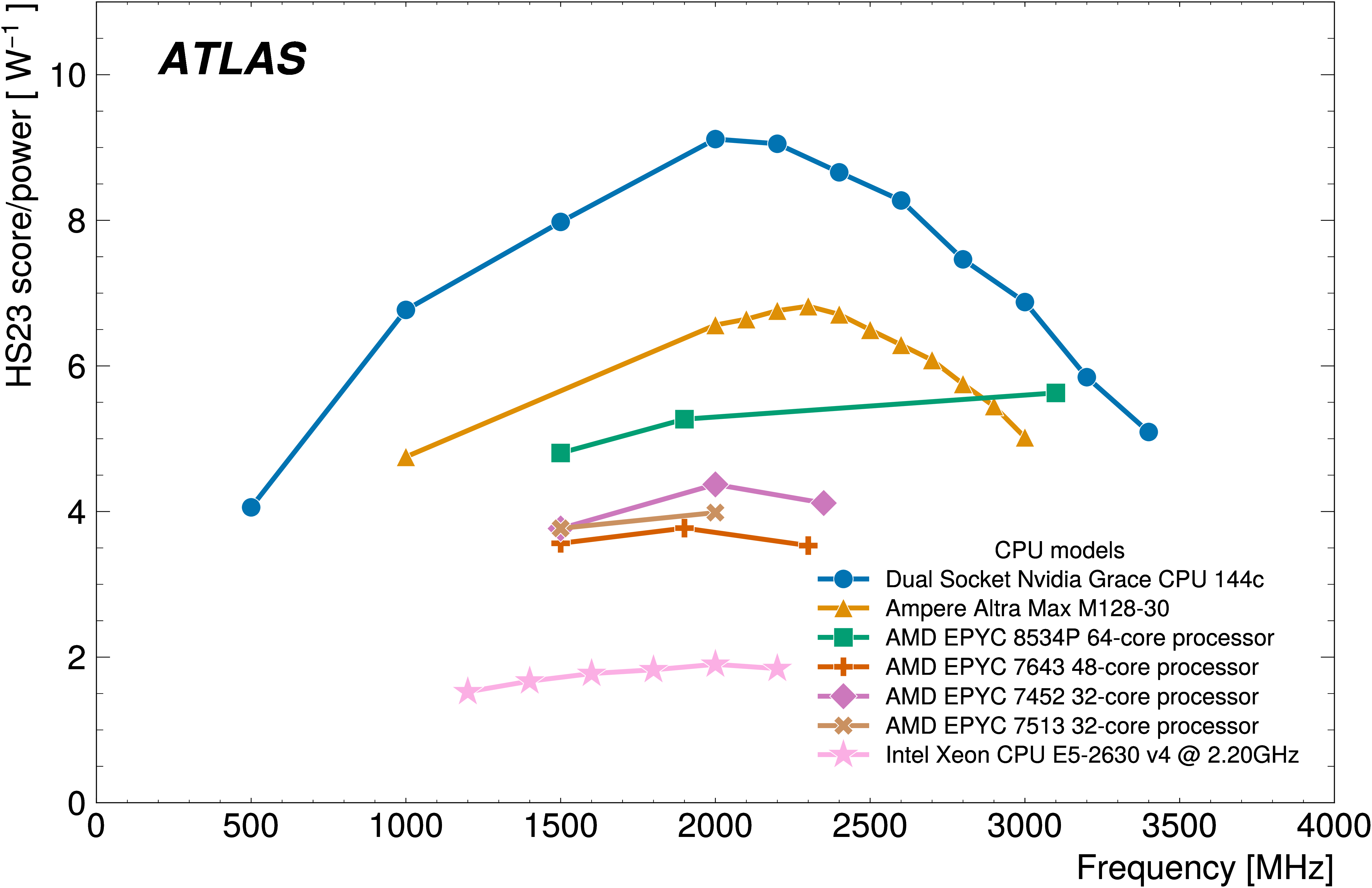

Panic? Never. We are scientists. When we have problems, we solve them. It is in our training. And that is what is going on now at the conference. Our uncertainty does not come from a lack of expertise. Rather, nobody can accurately predict how quickly, or in which direction, the technology will change in the coming years. How much faster will the chips be? How many cores? How much energy will be needed? Will Moore’s Law hold? But we can attack the known issues: improved programming, better usage of multicore technology, faster networking, and more effective storage.

Uncertainties in technology are not new to this community. Nobody knew the word “petabyte” when we started planning the LHC in the 1980s. The word “gigabyte” was brand new, describing only the largest of the mass storage devices of the most sophisticated computers. Yet we knew we would have to process petabytes of data if we had any hope of finding the Higgs boson, a particle that turns up in ATLAS only once in every trillion (1,000,000,000,000) proton-proton collisions delivered by the LHC.

Our goal now is to detect and measure millions of Higgs bosons in the next decade. Doing so is perhaps our best chance to solve the puzzle of dark matter and other profound questions that go beyond the Standard Model, our current understanding of the universe. This will require major upgrades to our detector technology, faster electronics, better analysis methods, and – at the heart of it all – faster, more efficient computing.

Nowadays, anyone can go down to the local computing store to buy a petabyte of data storage. That’s only a few hundred external backup drives, after all. And, I imagine, in another couple of decades, the problems of the HL-LHC will also seem trivial. But that will only be because fundamental research pushed the requirements of our technology. And because those nerds in the conference next door relentlessly pursued the necessary solutions.

As far as Mel’s goes, let’s hope the data rate stays the same. The servers are great the way they are, and I think they work at just the right speed. And, to be honest, I don’t think my nerves could handle much more coffee. Sure tastes good, though…