The Higgs boson: the hunt, the discovery, the study and some future perspectives

4 July 2018 | By

The origins of the Higgs boson

Many questions in particle physics are related to the existence of particle mass. The “Higgs mechanism,” which consists of the Higgs field and its corresponding Higgs boson, is said to give mass to elementary particles. By “mass” we mean the inertial mass, which resists any attempt to accelerate an object, rather than the gravitational mass, which is sensitive to gravity. In Einstein’s celebrated formula E = mc², the “m” is the inertial mass of the particle. In a sense, mass is the essential quantity: it is what tells us that there is a particle at a given place rather than nothing.

In the early 1960s, physicists had a powerful theory of electromagnetic interactions and a descriptive model of the weak nuclear interaction – the force that is at play in many radioactive decays and in the reactions which make the Sun shine. They had identified deep similarities between the structure of these two interactions, but a unified theory at the deeper level seemed to require that particles be massless even though real particles in nature have mass.

In 1964, theorists proposed a solution to this puzzle. Independent efforts by Robert Brout and François Englert in Brussels, Peter Higgs at the University of Edinburgh, and others led to a concrete model known as the Brout-Englert-Higgs (BEH) mechanism. The peculiarity of this mechanism is that it can give mass to elementary particles while retaining the nice structure of their original interactions. Importantly, this structure ensures that the theory remains predictive at very high energy. Particles that carry the weak interaction would acquire masses through their interaction with the Higgs field, as would all matter particles. The photon, which carries the electromagnetic interaction, would remain massless.

In the history of the universe, particles began to interact with the Higgs field just 10⁻¹² seconds after the Big Bang, in a phase transition. Before this phase transition, all elementary particles were massless and travelled at the speed of light. Once the expanding universe had cooled enough, their interaction with the Higgs field gave them mass. The BEH mechanism implies that the mass of each elementary particle is linked to how strongly it couples to the Higgs field. These values are not predicted by current theories. However, once the mass of a particle is measured, its interaction with the Higgs boson can be determined.
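For readers who like to see this link in symbols, the textbook tree-level Standard Model relations are sketched below. They assume the usual convention in which the Higgs field's vacuum expectation value is v ≈ 246 GeV; the symbols y_f (the coupling of fermion f to the Higgs field) and g, g′ (the weak interaction couplings) are standard notation introduced here, not defined elsewhere in this article.

```latex
m_f = \frac{y_f\, v}{\sqrt{2}}, \qquad
m_W = \frac{g\, v}{2}, \qquad
m_Z = \frac{\sqrt{g^{2} + g'^{2}}\; v}{2}, \qquad
v \approx 246~\text{GeV}
```

Read backwards, the first relation gives y_f = √2 m_f / v, so a measured mass fixes the predicted coupling. For the top quark, with a mass of about 173 GeV, this gives a coupling very close to 1.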

The BEH mechanism had two key implications. First, the weak interaction would be mediated by heavy particles, namely the W and Z bosons, which were discovered at CERN in 1983. Second, the new field itself would materialize as another particle. The mass of this particle was unknown, but researchers knew it should be lower than about 1 TeV, a value well beyond the limits of the accelerators conceivable at the time. This particle was later called the Higgs boson and would become the most sought-after particle in all of particle physics.


The accelerator, the experiments and the Higgs

The Large Electron-Positron collider (LEP), which operated at CERN from 1989 to 2000, was the first accelerator to have significant reach into the potential mass range of the Higgs boson. Though LEP did not find the Higgs boson, it made significant headway in the search, determining that the mass should be larger than 114 GeV.

In 1984, a few physicists and engineers at CERN were exploring the possibility of installing a proton-proton accelerator with a very high collision energy of 10-20 TeV in the same tunnel as LEP. This accelerator would probe the full possible mass range for the Higgs, provided that the luminosity[1] was very high. However, this high luminosity would mean that each interesting collision would be accompanied by tens of background collisions. Given the state of detector technology of the time, this seemed a formidable challenge. CERN wisely launched a strong R&D programme, which enabled fast progress on the detectors. This seeded the early collaborations, which would later become ATLAS, CMS and the other LHC experiments.

On the theory side, the 1990s saw much progress: physicists studied the production of the Higgs boson in proton-proton collisions and all its different decay modes. As the rate of each of these decay modes depends strongly on the unknown Higgs boson mass, future detectors would need to measure all possible kinds of particles to cover the wide mass range. Each decay mode was studied using intensive simulations, and the important Higgs decay modes were amongst the benchmarks used to design the detectors.

Meanwhile, at the Fermi National Accelerator Laboratory (Fermilab) outside Chicago, Illinois, the Tevatron collider was beginning to have some discovery potential for a Higgs boson with a mass around 160 GeV. The Tevatron, the scientific predecessor of the LHC, collided protons with antiprotons from 1986 to 2011.

In 2008, after a long and intense period of construction, the LHC and its detectors were ready for the first beams. On 10 September 2008, the first injection of beams into the LHC was a big event at CERN, with the international press and authorities invited. The machine worked beautifully and we had very high hopes. Alas, ten days later, a problem in the superconducting magnets significantly damaged the LHC. A full year was necessary for repairs and to install a better protection system. The incident revealed a weakness in the magnets, which limited the collision energy to 7 TeV.

When restarting, we faced a difficult decision: should we take another year to repair the weaknesses all around the ring, enabling operation at 13 TeV? Or should we start immediately and operate the LHC at 7 TeV, even though a factor of three fewer Higgs bosons would be produced? Detailed simulations showed that there was a chance of discovering the Higgs boson at the reduced energy, in particular in the mass range where competition from the Tevatron was most pressing, so we decided that starting immediately at 7 TeV was worth taking the chance.

The LHC restarted in 2010 at 7 TeV with a modest luminosity, which would increase substantially in 2011. The ATLAS Collaboration had made good use of the forced stop of 2009 to better understand the detector and prepare the analyses. In 2010, Higgs experts from experiments and theory created the LHC Higgs Cross-Section[2] Working Group (LHCHXSWG), which proved invaluable as a forum to gather the best calculations and to discuss the difficult aspects of Higgs production and decay. These results have since been documented regularly in the “LHCHXSWG Yellow Reports,” which are well known in the community.

The discovery of the Higgs boson

As Higgs bosons are extremely rare, sophisticated analysis techniques are required to spot the signal events within the large backgrounds from other processes. After signal-like events have been identified, powerful statistical methods are used to quantify how significant the signal is. As statistical fluctuations in the background can also look like signals, stringent statistical requirements must be met before a new signal is claimed as a discovery. The significance is typically quoted in units of σ, the standard deviation of the normal distribution. In particle physics, a significance of 3σ is referred to as evidence, while 5σ is referred to as an observation; the latter corresponds to a probability of less than 1 in a million that a background fluctuation would produce a signal at least as large.
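The conversion from a number of σ to a probability can be sketched in a few lines of Python. This assumes the one-sided (upper-tail) convention commonly used for discovery significance in particle physics; the function name one_sided_p_value is our own illustration, not part of any ATLAS analysis software.

```python
import math

def one_sided_p_value(z: float) -> float:
    """Chance that a pure-background Gaussian fluctuation reaches at least z standard deviations."""
    # Upper-tail area of the standard normal distribution: 0.5 * erfc(z / sqrt(2)).
    return 0.5 * math.erfc(z / math.sqrt(2.0))

for z in (3.0, 5.0):
    p = one_sided_p_value(z)
    print(f"{z:.0f} sigma: p = {p:.3g}, about 1 in {1.0 / p:,.0f}")
```

With this convention, 3σ corresponds to roughly 1 chance in 740 and 5σ to roughly 1 chance in 3.5 million, consistent with the "less than 1 in a million" quoted above.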

Eager physicists analysed the data as soon as it arrived. In the summer of 2011, there was a small excess in the Higgs decay to two W bosons for a mass around 140 GeV. Things got more interesting when an excess at a similar mass was also seen in the diphoton channel. However, as the dataset increased, the size of this excess first increased and then decreased.

By the end of 2011, ATLAS had collected and analysed 5 fb⁻¹ of data at a centre-of-mass energy of 7 TeV. The combination of all channels excluded the Standard Model Higgs boson at all masses except for a small window around 125 GeV, where an excess with a significance of around 3σ was observed, driven largely by the diphoton and four-lepton decay channels. The results were shown at a special seminar at CERN on 13 December 2011. Although neither experiment had results strong enough to claim an observation, it was particularly telling that both ATLAS and CMS saw excesses at the same mass.
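Footnotes 1 and 2 define luminosity and cross-section; multiplying a cross-section by the integrated luminosity gives the expected number of events, N = σ × L. The sketch below applies this to a 5 fb⁻¹ dataset at 7 TeV. The total Higgs production cross-section of about 17 pb and the diphoton branching fraction of about 0.23% are approximate values assumed for illustration, and detector acceptance and efficiency are ignored.

```python
# Expected event counts from N = cross-section x integrated luminosity.
# Input values are approximate and assumed for illustration only.

PB_PER_FB = 1000.0  # 1 fb^-1 = 1000 pb^-1

def expected_events(cross_section_pb: float, int_lumi_fb: float) -> float:
    """Events expected for a process with the given cross-section (pb)
    in a dataset with the given integrated luminosity (fb^-1)."""
    return cross_section_pb * int_lumi_fb * PB_PER_FB

sigma_higgs_7tev_pb = 17.0  # assumed: approximate total SM Higgs production at 7 TeV, m_H = 125 GeV
br_diphoton = 0.0023        # assumed: approximate H -> gamma gamma branching fraction

n_higgs = expected_events(sigma_higgs_7tev_pb, 5.0)
n_diphoton = n_higgs * br_diphoton  # before any detector acceptance or selection efficiency

print(f"Higgs bosons produced in 5 fb^-1: {n_higgs:,.0f}")
print(f"of which decay to two photons:    {n_diphoton:,.0f}")
```

Under these assumptions, tens of thousands of Higgs bosons are produced, yet only about two hundred decay to a photon pair before any selection, which illustrates why the signal is so hard to extract from the background.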

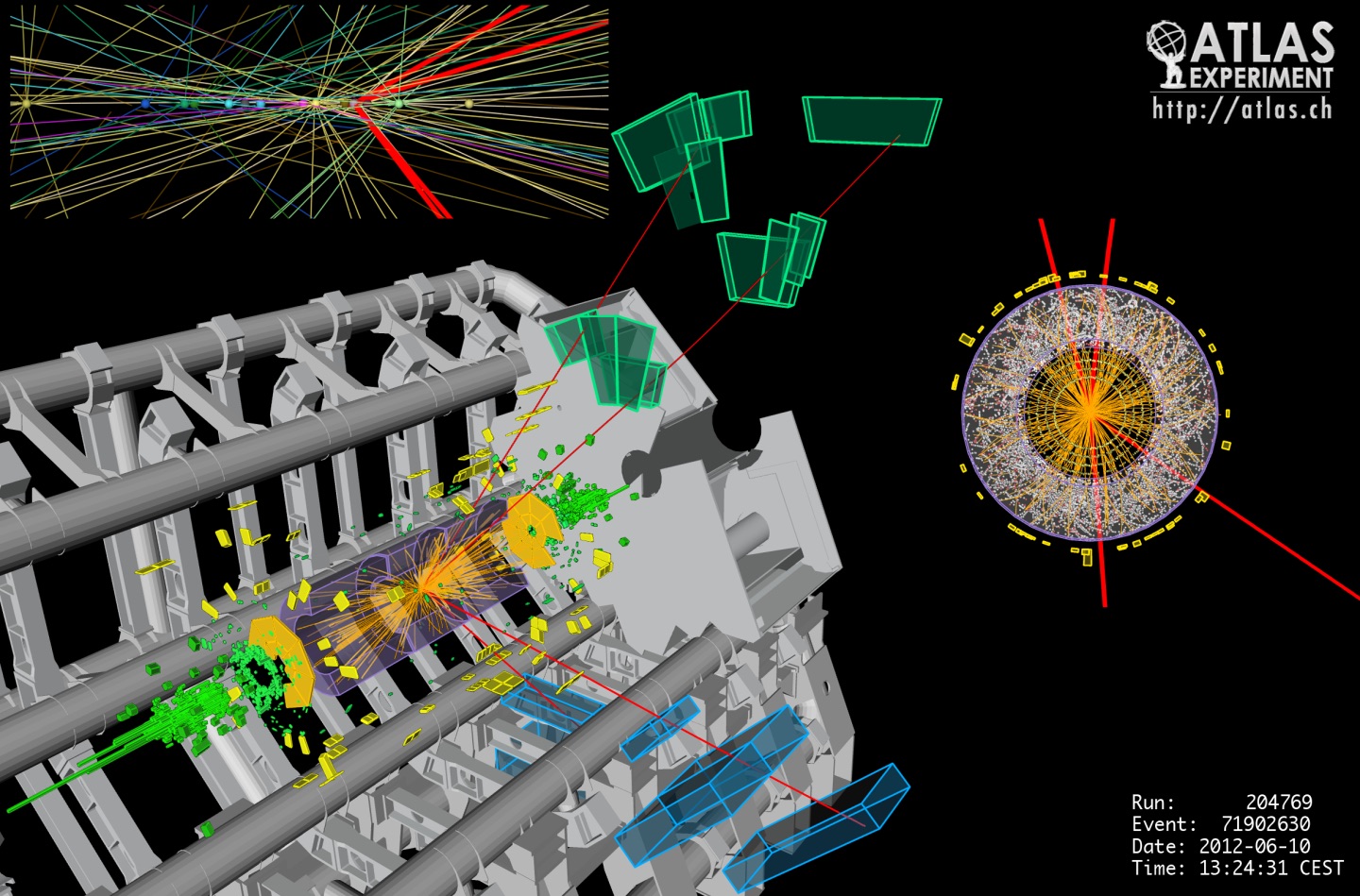

In 2012, the energy of the LHC was increased from 7 to 8 TeV, which increased the cross-sections for Higgs boson production. The data arrived quickly: by the summer of 2012, ATLAS had collected 5 fb⁻¹ at 8 TeV, doubling the dataset. As quickly as the data arrived it was analysed and, sure enough, the significance of that small bump around 125 GeV increased further. Rumours were flying around CERN when a joint seminar between ATLAS and CMS was announced for 4 July 2012. Seats at the seminar were so highly sought after that only the people who queued all night were able to get into the room. The presence of François Englert and Peter Higgs at the seminar increased the excitement even further.
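Why does more data make a bump more significant? A common back-of-the-envelope estimate for a small signal s on top of a large background b is Z ≈ s/√b. Since both s and b grow in proportion to the integrated luminosity L, Z grows like √L. The toy sketch below assumes that approximation and hypothetical event counts giving a starting point of 3σ in 5 fb⁻¹; it deliberately ignores the higher 8 TeV cross-section and any analysis improvements, so it only illustrates the trend and is not how the ATLAS significance was computed.

```python
import math

def approx_significance(signal: float, background: float) -> float:
    """Simple estimate Z ~ s / sqrt(b), valid when s is much smaller than b."""
    return signal / math.sqrt(background)

def scaled_significance(z_ref: float, lumi_ref: float, lumi_new: float) -> float:
    """If s and b both scale linearly with luminosity, Z scales as sqrt(L)."""
    return z_ref * math.sqrt(lumi_new / lumi_ref)

# Hypothetical event counts, chosen only so that the starting significance is 3 sigma.
s, b = 30.0, 100.0
z_2011 = approx_significance(s, b)                   # 3.0
z_doubled = scaled_significance(z_2011, 5.0, 10.0)   # about 4.24
# Cross-check: doubling s and b directly gives the same answer.
z_check = approx_significance(2 * s, 2 * b)

print(f"{z_2011:.2f} sigma -> {z_doubled:.2f} sigma (check: {z_check:.2f})")
```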

At the famous seminar, the spokespeople of the ATLAS and CMS Collaborations presented their results one after the other, each reporting an excess with a significance of around 5σ at a mass of 125 GeV. To conclude the session, CERN Director-General Rolf Heuer declared, “I think we have it.”

The ATLAS Collaboration celebrated the discovery with champagne and by giving each member of the collaboration a t-shirt with the famous plots. Incidentally, only once the t-shirts had been printed was it discovered that there was a typo in one of the plots. No matter: these t-shirts went on to become collector’s items.

ATLAS and CMS published their results in Physics Letters B a few weeks later; the ATLAS paper was titled “Observation of a New Particle in the Search for the Standard Model Higgs Boson with the ATLAS Detector at the LHC.” The Nobel Prize in Physics was awarded to Peter Higgs and François Englert in 2013.

What we have learned since discovery

After the discovery, we began to study the properties of the newly discovered particle to understand whether it was the Standard Model Higgs boson or something else. In fact, we initially called it a Higgs-like boson, as we did not want to claim it was the Higgs boson until we were certain. The mass, the final unknown parameter in the Standard Model, was one of the first parameters measured and was found to be approximately 125 GeV (roughly 130 times the mass of the proton). It turned out that we were very lucky: at this mass, the widest variety of decay modes is accessible to experiment.

In the Standard Model, the Higgs boson is unique: it is the only elementary particle with zero spin, and it has no electric charge and no strong interaction. The spin and parity were measured through angular correlations between the particles it decays to. Sure enough, these properties were found to be as predicted. At this point, we began to call it “the Higgs boson.” Of course, it still remains to be seen whether it is the only Higgs boson or one of many, such as those predicted by supersymmetry.

The discovery of the Higgs boson relied on measurements of its decays to vector bosons. In the Standard Model, different couplings determine its interactions with fermions and with bosons, so new physics might affect them differently. It is therefore important to measure both. The first direct probe of the fermion couplings came from decays to tau leptons, which were observed in the combination of ATLAS and CMS results performed at the end of Run 1. During Run 2, the increase in the centre-of-mass energy to 13 TeV and the larger dataset allowed further channels to be probed. Over the past year, evidence has been obtained for the Higgs decay to bottom quarks, and the production of the Higgs boson together with top quarks has been observed.[3] This means that the interaction of the Higgs boson with fermions has been clearly established.

Perhaps one of the neatest ways to summarise what we currently know about the interaction of the Higgs boson with other Standard Model particles is to compare the interaction strength to the mass of each particle, as shown in Figure 4. This clearly shows that the interaction strength depends on the particle mass: the heavier the particle, the stronger its interaction with the Higgs field. This is one of the main predictions of the BEH mechanism in the Standard Model.
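The mass dependence described above can be sketched numerically. One common way to put fermions and the W and Z bosons on a single axis is a "reduced" coupling that, in the Standard Model, equals m/v for every particle, where v ≈ 246 GeV is the vacuum expectation value of the Higgs field. The masses below are rounded reference values assumed for illustration, and this normalisation is our assumption about how such plots are usually drawn, not a description of the exact quantities in Figure 4.

```python
# Standard Model prediction for the "reduced" Higgs coupling of each particle:
#   fermions:     y_f / sqrt(2)       = m_f / v
#   W, Z bosons:  sqrt(g_V / (2 v))   = m_V / v   (with g_V = 2 m_V^2 / v)
# so every particle lands on a straight line of slope 1/v.
# Masses are rounded reference values in GeV, assumed for illustration.

V_EV_GEV = 246.22  # vacuum expectation value of the Higgs field

masses_gev = {
    "muon": 0.1057,
    "tau": 1.777,
    "bottom": 4.18,
    "W": 80.38,
    "Z": 91.19,
    "top": 172.5,
}

def reduced_coupling(mass_gev: float, v_gev: float = V_EV_GEV) -> float:
    """SM reduced coupling: directly proportional to the particle mass."""
    return mass_gev / v_gev

for name, mass in sorted(masses_gev.items(), key=lambda item: item[1]):
    print(f"{name:>6}: m = {mass:8.4f} GeV, reduced coupling = {reduced_coupling(mass):.5f}")
```

Plotting these values against mass on logarithmic axes gives a straight line; a measured point falling off that line would be a sign of physics beyond the Standard Model.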

We don’t only do tests to verify that the properties of the Higgs boson agree with those predicted by the Standard Model; we specifically look for properties that would provide evidence for new physics. For example, constraining the rate at which the Higgs boson decays to invisible or unobserved particles provides stringent limits on the existence of new particles light enough to be produced in its decays. We also look for decays to combinations of particles forbidden in the Standard Model. So far, none of these searches has found anything unexpected, but that doesn’t mean that we’re going to stop looking anytime soon!


Outlook

2018 is the last year in which ATLAS will take data as part of the LHC’s Run 2. During this run, 13 TeV proton-proton collisions have produced approximately 30 times as many Higgs bosons as were used in the 2012 Higgs boson discovery. As a result, a growing number of results are probing the Higgs boson in greater detail.

Over the next few years, analysis of the large Run-2 dataset will not only be an opportunity to reach a new level of precision in previous measurements, but also to investigate new methods to probe Standard Model predictions and to test for the presence of new physics in as model-independent a way as possible. This new level of precision will rely on obtaining a deeper level of understanding of the performance of the detector, as well as the simulations and algorithms used to identify particles passing through it. It also poses new challenges for theorists to keep up with the improving experimental precision.

In the longer term, another big step in performance will be brought by the High-Luminosity LHC (HL-LHC), planned to begin operation in 2024. The HL-LHC will increase the number of collisions by another factor of 10. Among other measurements, this will open the possibility to investigate a very peculiar property of the Higgs boson: that it couples to itself. Events produced via this coupling feature two Higgs bosons in the final state, but they are exceedingly rare. Thus, they can only be studied within a very large number of collisions and using sophisticated analysis techniques. To match the increased performance of the LHC, the ATLAS and CMS detectors will undergo comprehensive upgrades in the years before the HL-LHC starts.
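Part of what makes the Higgs self-coupling such a sharp test is that, in the Standard Model, it is not a free parameter: once the Higgs boson mass and the vacuum expectation value v are known, it is fixed. The sketch below assumes the common convention for the Higgs potential, V = -μ²|Φ|² + λ|Φ|⁴, in which m_H² = 2λv²; other conventions differ by constant factors. Measuring events with two Higgs bosons would test whether nature follows this prediction.

```python
# Standard Model Higgs self-coupling implied by the measured mass (tree level).
# Convention assumed: V = -mu^2 |Phi|^2 + lambda |Phi|^4, so m_H^2 = 2 * lambda * v^2.

M_H_GEV = 125.0    # Higgs boson mass (rounded)
V_EV_GEV = 246.22  # vacuum expectation value of the Higgs field

def self_coupling(m_h: float, v: float) -> float:
    """Quartic self-coupling lambda predicted from m_H and v."""
    return m_h ** 2 / (2.0 * v ** 2)

lam = self_coupling(M_H_GEV, V_EV_GEV)
# Strength of the three-Higgs (HHH) vertex in this convention: 6 * lambda * v = 3 * m_H^2 / v
g_hhh = 3.0 * M_H_GEV ** 2 / V_EV_GEV

print(f"lambda ~ {lam:.3f}, HHH coupling ~ {g_hhh:.0f} GeV")
```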

Looking more generally, the discovery of the Higgs boson with a mass of 125 GeV sets a new foundation for particle physics to build on. Many questions remain in the field, most of which have some relation to the Higgs sector. For example:

- A popular theory beyond the Standard Model is “supersymmetry”, which has attractive features for solving current issues, such as the nature of dark matter. The minimal version of supersymmetry predicts that the Higgs boson mass should be below about 120-130 GeV, depending on some other parameters. Is it a coincidence that the observed value sits right at this critical value, still marginally allowing for this supersymmetric model?

- Several models have recently been proposed in which the only link between dark matter and ordinary matter would be through the Higgs boson.

- Stability of the universe: the value of 125 GeV lies almost exactly at the critical boundary between a stable universe and a meta-stable universe. A meta-stable system possesses another, lower-energy state into which it can decay at any time through quantum tunnelling.[4] Is this also a coincidence?

- The phase transition: the details of this transition may play a role in the process that led our universe to consist entirely of matter, with no antimatter. Present calculations with the Standard Model Higgs boson alone are inconsistent with the observed matter-antimatter asymmetry. Is this a call for new physics, or only a sign of incomplete calculations?

- Are fermion masses all related to the Higgs field? If so, why is there such a huge hierarchy between the fermion masses, spanning from fractions of an electron-volt for the mysterious neutrinos up to the very heavy top quark, with a mass of about 173 billion electron-volts?

From what we’ve learned about it so far, the Higgs boson seems to play a very special role in nature… Can it show us the way to answer further questions?

About the authors

Heather Gray is an experimental physicist at the Lawrence Berkeley National Lab, USA. She is a member of the ATLAS experiment at CERN’s Large Hadron Collider, to which she has contributed in many ways, including the measurement of Higgs boson interactions with quarks. Bruno Mansoulié is a scientist at CEA-IRFU, Saclay, France. He has worked as both a theoretical and an experimental physicist, and is a founding member of ATLAS, where, among other things, he performed combined Higgs boson analyses and led the Higgs working group. Both enjoy communicating particle physics to non-specialists.

[1] The luminosity is the machine parameter that determines the number of events per second for a given physical process: the higher the luminosity, the more events per second.

[2] The cross-section is a measure of the probability for a given process to occur in a proton-proton collision. Processes with larger cross-sections occur more often than processes with smaller cross-sections.

[3] Since the publication of this feature, ATLAS has observed the Higgs boson decaying into a pair of bottom (b) quarks with a significance of 5.4 standard deviations.

[4] Fortunately, even if we are a little on the meta-stable side, the lifetime for decay is extremely long compared to the present age of the universe…

Further reading

- Broken symmetry: Searches for supersymmetry at the LHC by George Redlinger and Paul de Jong, ATLAS Feature Article

- ATLAS Collaboration, Observation of an excess of events in the search for the Standard Model Higgs boson in the gamma-gamma channel with the ATLAS detector, July 2012

- ATLAS Collaboration, Observation of an excess of events in the search for the Standard Model Higgs boson in the H → ZZ(*) → 4l channel with the ATLAS detector, July 2012

- ATLAS Collaboration, Observation of a New Particle in the Search for the Standard Model Higgs Boson with the ATLAS Detector at the LHC, Phys. Lett. B 716 (2012) 1-29

- ATLAS Collaboration, A Particle Consistent with the Higgs Boson Observed with the ATLAS Detector at the Large Hadron Collider, Science Vol. 338, no. 6114 p1576-158

- ATLAS and CMS Collaborations, Measurements of the Higgs boson production and decay rates and constraints on its couplings from a combined ATLAS and CMS analysis of the LHC proton-proton collision data at 7 and 8 TeV, JHEP 08 (2016) 045