Ensuring high-quality data at ATLAS

13 November 2019

Over the course of Run 2 of the LHC – from 2015 to 2018 – ATLAS recorded an unprecedented 147 inverse femtobarns (fb⁻¹) of proton–proton collision data at a centre-of-mass energy of 13 TeV. Before these data could be distributed to computing centres worldwide, every byte was carefully scrutinised to ensure its integrity. During Run 2, ATLAS achieved an exceptionally high data-quality efficiency for a hadron collider, with over 95% of the 13 TeV proton–proton collision data certified for physics analysis. In a new paper released today, the ATLAS data quality team summarises how this excellent result was achieved.

Throughout Run 2, teams of “online shifters” were on hand 24 hours a day in the ATLAS Control Room. Their task was to monitor, in real time, the detector components and algorithms used to reconstruct and select interesting collision data. The status of these components and other relevant time-dependent information – called “detector conditions” – is documented in dedicated databases. Should a technical problem arise during data-taking, the shifters would quickly identify and correct the issue, while also taking note of which data were collected while the issue was present. In this way, physicists could later filter out data collected during anomalous operating conditions, where the quality of the data could be compromised.

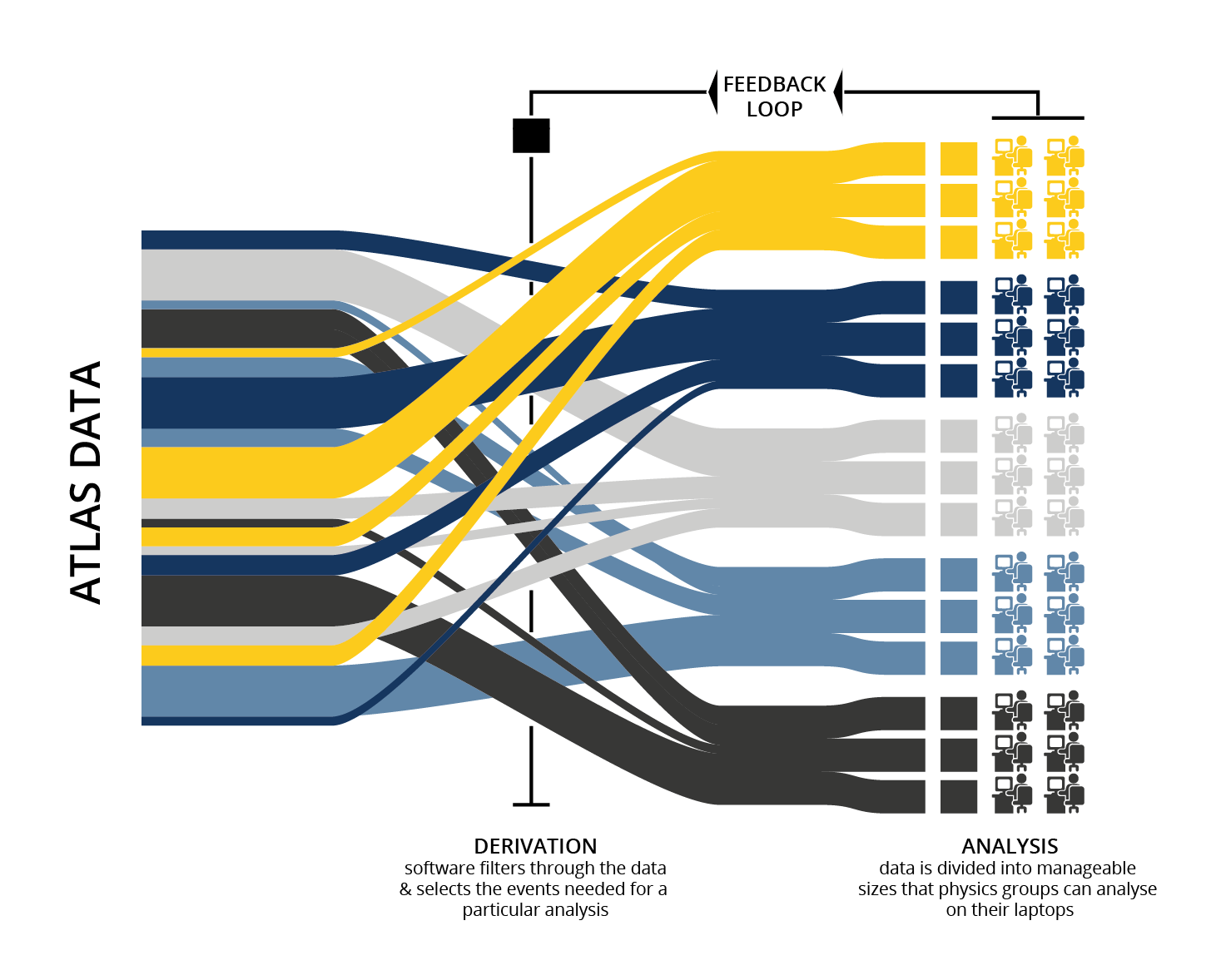

Once recorded by ATLAS, data would go through at least two stages of internal review by data quality experts. These experts were tasked with assessing reconstructed datasets as they became available, ensuring that data of degraded quality were appropriately marked, and eventually certifying that the dataset could be safely released for physics analysis. This workflow is shown in Figure 1.

First, a fraction of the recorded data are reconstructed, even while the LHC is still delivering collisions. This small “express” data stream is assessed by data quality experts, who run custom algorithms on the data to identify any symptoms that could indicate a quality issue. Some issues can be corrected via software or conditions updates during the data reconstruction process. After identifying and correcting possible issues, ATLAS experts update the relevant information in the conditions database.

Once this first review is complete, the reconstruction of the entire dataset collected during an uninterrupted period of LHC collisions (a “fill”) is launched. Following this “bulk” data processing, data quality experts assess the quality of the whole available dataset, using the same dedicated tools described above. Any remaining data affected by data quality issues are then flagged for rejection from physics analysis.

Only once this validation process is complete do experts create a “Good Runs List” (GRL), which excludes all data affected by data quality problems. A GRL is essentially a menu of good physics data for ATLAS physicists to use for their analyses.
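To make the idea of a GRL more concrete, here is a minimal sketch of how such a list could be used to filter events. It assumes a GRL can be represented as a mapping from run numbers to inclusive ranges of "luminosity blocks" (short time intervals within a run). This representation, the run numbers, the ranges, and the function names are all illustrative assumptions, not the actual ATLAS format or software.

```python
# Hypothetical GRL: run number -> inclusive (first, last) luminosity-block ranges.
# All run numbers and ranges below are invented for illustration.
GOOD_RUNS = {
    1001: [(1, 250), (300, 412)],
    1002: [(5, 180)],
}


def is_certified(run, lumi_block, grl=GOOD_RUNS):
    """Return True if (run, lumi_block) falls inside a certified range."""
    return any(first <= lumi_block <= last for first, last in grl.get(run, []))


def select_certified(events, grl=GOOD_RUNS):
    """Keep only the events recorded during certified data-taking."""
    return [ev for ev in events if is_certified(ev["run"], ev["lumi_block"], grl)]


events = [
    {"run": 1001, "lumi_block": 100},  # inside a certified range
    {"run": 1001, "lumi_block": 275},  # in the gap between two ranges
    {"run": 1002, "lumi_block": 180},  # on the inclusive upper edge
    {"run": 1003, "lumi_block": 10},   # run not present in the list
]

good_events = select_certified(events)
print(len(good_events), "of", len(events), "events pass the GRL")
```

In this sketch, data taken during a flagged interval simply never appear in the list, so an analysis only has to check membership and needs no knowledge of why an interval was rejected.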

The integrated luminosity of a GRL tells us how much collision data were certified for physics use over a given period of time. By comparing the integrated luminosity of a GRL to the integrated luminosity recorded by ATLAS for a given dataset, one can determine a data quality efficiency for that dataset: the percentage of the recorded data that is of high enough quality for physics analysis. The resulting good-for-physics proton–proton collision dataset for Run 2 amounted to 139 fb⁻¹.
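The efficiency described above is a simple ratio of certified to recorded integrated luminosity. The sketch below shows the arithmetic for a dataset made up of several data-taking periods. The period names and luminosity values are invented for illustration and are not ATLAS results, and the function name is hypothetical.

```python
def data_quality_efficiency(certified_lumi, recorded_lumi):
    """Fraction of the recorded integrated luminosity certified for physics."""
    if recorded_lumi <= 0:
        raise ValueError("recorded luminosity must be positive")
    if not 0 <= certified_lumi <= recorded_lumi:
        raise ValueError("certified luminosity must lie between 0 and the recorded value")
    return certified_lumi / recorded_lumi


# (period, recorded, certified) in inverse femtobarns; illustrative values only.
periods = [
    ("period A", 10.0, 9.0),
    ("period B", 20.0, 19.5),
]

recorded_total = sum(recorded for _, recorded, _ in periods)
certified_total = sum(certified for _, _, certified in periods)
overall = data_quality_efficiency(certified_total, recorded_total)

for name, recorded, certified in periods:
    print(f"{name}: {data_quality_efficiency(certified, recorded):.1%}")
print(f"overall: {overall:.1%}")
```

Note that the overall figure is obtained from the summed luminosities rather than by averaging the per-period efficiencies, so periods with more recorded data carry proportionally more weight.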

While there are numerous low-level issues that can have a small impact on data quality, the largest contributions tend to be a result of one-off problems. This is reflected in Figure 2, where the cumulative data quality efficiency is shown per year between 2015 and 2018. For example, in 2017 a combination of operational problems and hardware issues led to the ATLAS toroid magnet system being switched off for a short period. This resulted in a sharp drop in the data quality efficiency from 98% to 94%.

However, the ATLAS detector operation teams learned from each of these one-off events and constantly improved their procedures. As a result, ATLAS data quality efficiency during Run 2 increased each year. Over the full course of Run 2, ATLAS recorded proton–proton collision data with a data quality efficiency of 95.6%. The lessons learned and experience gained during Run 2 will be built upon in Run 3 to help ATLAS continue to operate with the highest achievable data quality efficiency.

Learn more

- ATLAS data quality operations and performance for 2015–2018 data-taking (arXiv:1911.04632, see figures).

- See also the full lists of ATLAS Conference Notes and ATLAS Physics Papers.