Evolving ATLAS conditions data architecture for LHC Runs 3 and 4

22 June 2023

What do ATLAS physicists mean when they talk about “data”? The first answer that often comes to mind is “physics data” – that is, the signals collected from high-energy collisions inside the ATLAS experiment. But in order to study these collisions, they need much more than that. Researchers rely on a wide range of auxiliary information called “conditions data”, which describes every aspect of the experiment during collisions. From sub-detector and accelerator calibrations to data-acquisition parameters, these data are essential for physicists to fully reconstruct and study LHC collisions.

For Run 3 of the LHC (2022–ongoing), the ATLAS Collaboration decided to change how it stores and processes conditions data. The significant efforts that went into this change – and the motivations for them – were presented at the 26th International Conference on Computing in High Energy and Nuclear Physics (CHEP) in May.

ATLAS data are stored, copied and distributed to more than 150 computing sites via the Worldwide LHC Computing Grid (WLCG). Throughout Run 1 and Run 2 of the LHC (2010–2018), the ATLAS Collaboration stored its conditions data in relational tables in an Oracle database, using infrastructure and software provided mainly by the CERN Information Technology (IT) department. The conditions database was accessed through an intermediate system, Frontier-Squid. Physicists used Athena (the ATLAS event processing software framework) to manage their database access and queries, which were sent as HTTP requests.

While this set-up worked well, researchers observed some weak points. First, service degraded when special workflows were run, because of low caching efficiency in the Frontier-Squid system. Second, conditions data management proved cumbersome because the data were stored in over 10,000 underlying tables. Third, the conditions database software required a specific version of Oracle software to work. Fourth, the software underlying Athena was no longer going to be supported by the CERN IT department. Finally, the data were stored in two different Oracle databases (Online and Offline), and replicating them required two different technologies, which was a concern for long-term support.

The ATLAS Collaboration has changed how it stores and processes "conditions data" – essential information describing every aspect of the experiment during collisions.

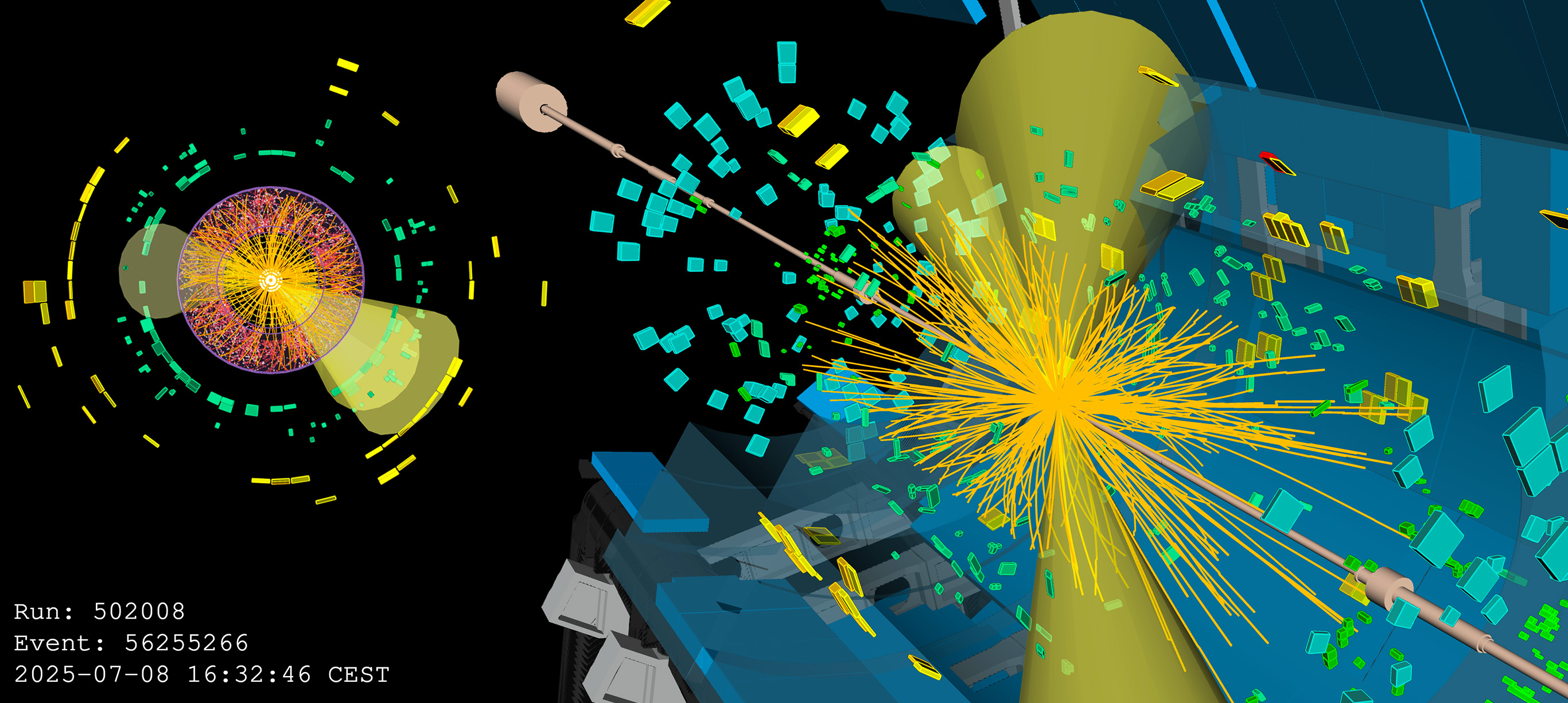

All these considerations led researchers to re-evaluate and reorganise the Oracle/Frontier architecture and the conditions data model for Run 3 of the LHC. The results of this effort are shown in Figure 1, which highlights the changes between Run 2 and Run 3. The conditions data were successfully consolidated in the Online Oracle cluster. The number of data replication technologies was reduced from two to one, which simplifies maintenance of the required hardware and keeps the operational personpower sustainable. The Frontier server deployment was also simplified by using only the Oracle database at CERN. The Run 3 infrastructure provides a new environment dedicated to “data processing” workflows, while an Offline cluster continues to host many other databases containing information about ATLAS publications or detector construction.

For Run 4 of the LHC, expected to begin in 2029, a new data model called CREST (Conditions REST) is under development. Developed in collaboration with the CMS experiment, this model decouples the clients from the storage solution. A comparison of the Run 3 and Run 4 architectures can be seen in Figure 2. CREST is designed for efficient data caching: the data are retrieved from the Oracle database using a unique key, so CREST always uses the same query for the same data, unlike the current model. CREST also allows researchers to easily place data in a filesystem, which is relevant for data preservation. CREST development is progressing well, with data migration tools already undergoing testing. The plan is to deploy CREST fully by the end of Run 3, so that it is ready in time for Run 4.
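To see why retrieving data by a unique key helps caching, consider the toy sketch below. It is not the CREST API or the Frontier protocol: the request strings (`by-run/...`, `payload/...`), the use of a SHA-256 hash as the key, the run-number validity ranges and all function names are assumptions made purely for illustration. The cache stores responses by exact request string, so requests that differ textually miss the cache even when they return the same data, whereas key-based requests for the same data are always identical.

```python
import hashlib


def payload_key(payload: bytes) -> str:
    """Derive a unique key from the payload content (assumed scheme)."""
    return hashlib.sha256(payload).hexdigest()


class CachingProxy:
    """Toy cache: responses are stored by exact request string."""

    def __init__(self, backend):
        self.backend = backend
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def get(self, request: str) -> bytes:
        if request in self.cache:
            self.hits += 1
        else:
            self.misses += 1
            self.cache[request] = self.backend(request)
        return self.cache[request]


# Hypothetical conditions: two payloads, each valid for a range of runs.
PAYLOADS = {payload_key(p): p for p in (b"calib-A", b"calib-B")}
VALIDITY = [
    (0, 100, payload_key(b"calib-A")),    # runs 0..99
    (100, 200, payload_key(b"calib-B")),  # runs 100..199
]


def resolve(run: int) -> str:
    """Map a run number to the key of the payload valid for it."""
    for since, until, key in VALIDITY:
        if since <= run < until:
            return key
    raise KeyError(run)


def backend(request: str) -> bytes:
    """Stand-in for the database: answers both request styles."""
    kind, _, arg = request.partition("/")
    if kind == "by-run":
        return PAYLOADS[resolve(int(arg))]
    if kind == "payload":
        return PAYLOADS[arg]
    raise ValueError(request)


def replay(runs, by_key: bool):
    """Send one request per run and return (cache hits, cache misses)."""
    proxy = CachingProxy(backend)
    for run in runs:
        request = f"payload/{resolve(run)}" if by_key else f"by-run/{run}"
        proxy.get(request)
    return proxy.hits, proxy.misses


RUNS = [5, 17, 42, 99, 101, 150]
print("query per run:", replay(RUNS, by_key=False))
print("query per key:", replay(RUNS, by_key=True))
```

In this sketch the six per-run requests are all distinct strings and never hit the cache, while the key-based requests collapse to two distinct strings, one per payload. The sketch also assumes the client already knows the validity ranges; a real system would need to serve that lookup as well.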

Learn more

- Towards a new conditions data infrastructure (CHEP 2023 proceedings paper, submitted to EPJ Web of Conferences)

- An information Aggregation and Analytics System for ATLAS Frontier (EPJ Web of Conferences 245, 04032 (2020))

- Conditions evolution of an experiment in mid-life, without the crisis (in ATLAS) (EPJ Web of Conferences 214, 04052 (2019))