Frantic for femtobarns...

19 August 2011

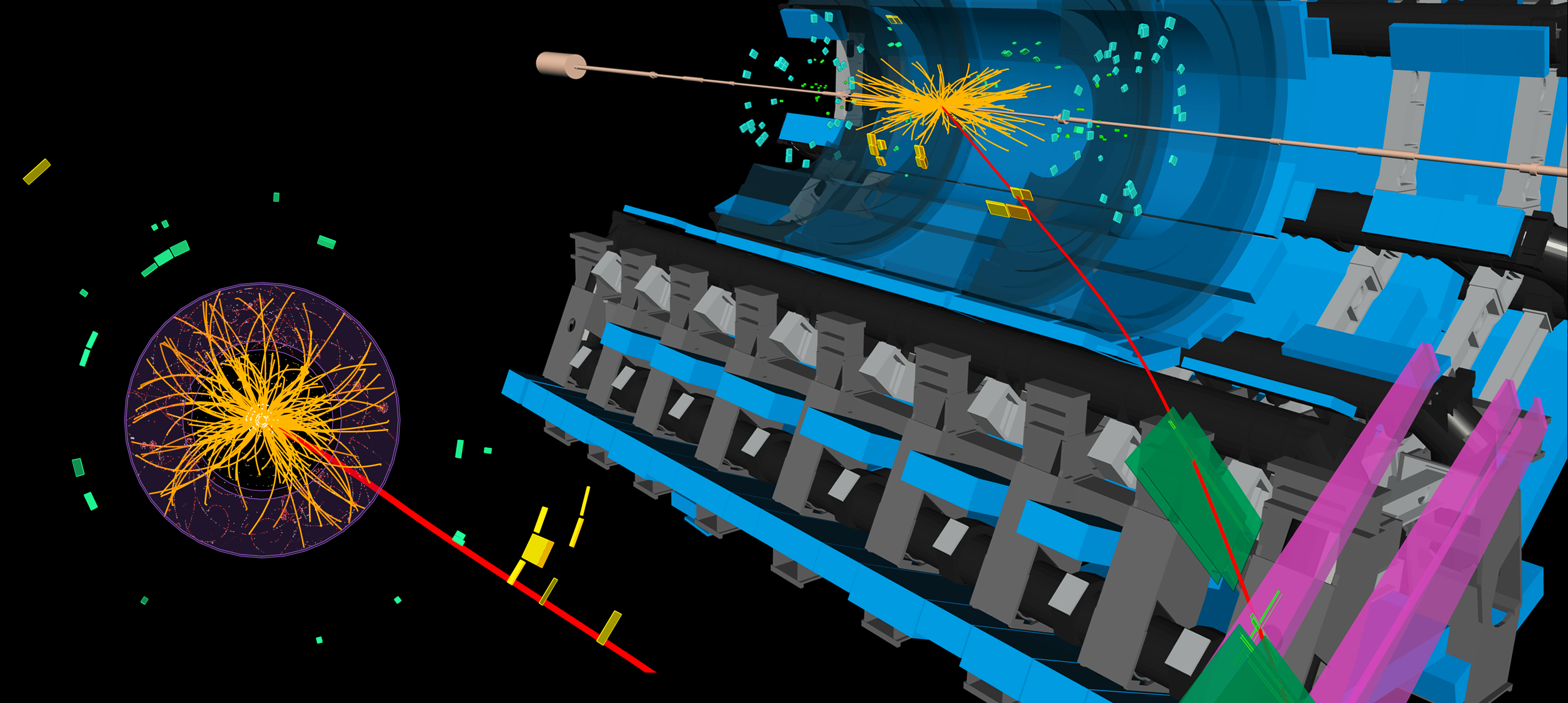

In particle physics, we describe the number of interesting particle collisions that we have in our data in terms of the "integrated luminosity", which is measured in units called inverse femtobarns. In the whole of 2010, the LHC delivered about 0.04 inverse femtobarns (about 3 million million collisions). Nowadays, it can deliver twice that in a single day! By the time of the EPS conference at the end of July we had collected one inverse femtobarn of data, but the hard-working ATLAS data analysts will have had more than twice that amount to look at by the start of the Lepton Photon conference next week. This is a remarkable achievement by the LHC people who fire the protons our way, but it also means that the pressure is really on us at ATLAS to make sure we record everything we can – there's no point in all these Higgs bosons (or whatever new particles might be out there) being produced by the LHC if we aren't capturing the data. This is where the ATLAS operations people come in.
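For the curious, the link between inverse femtobarns and a number of collisions is just a multiplication: the expected number of collisions is the integrated luminosity times the cross-section of the process. Here is a rough sketch of that arithmetic. The inelastic proton-proton cross-section of about 70 millibarns is my assumption for illustration (it is not quoted above), and the function and constant names are made up for this example.

```python
# Rough sketch: number of collisions = cross-section x integrated luminosity.
# ASSUMPTION: an inelastic proton-proton cross-section of ~70 millibarns,
# used only for illustration; it is not a number taken from this post.

MILLIBARN_IN_FEMTOBARNS = 1e12  # 1 mb = 1e-3 barn, 1 fb = 1e-15 barn


def expected_collisions(integrated_lumi_inv_fb, cross_section_mb=70.0):
    """Return the expected number of collisions.

    integrated_lumi_inv_fb: integrated luminosity in inverse femtobarns.
    cross_section_mb: cross-section in millibarns (assumed value by default).
    """
    cross_section_fb = cross_section_mb * MILLIBARN_IN_FEMTOBARNS
    return integrated_lumi_inv_fb * cross_section_fb


# The whole of 2010: about 0.04 inverse femtobarns.
collisions_2010 = expected_collisions(0.04)
print(f"2010: about {collisions_2010:.1e} collisions")
```

With these inputs the result is a little under 3 million million, which matches the figure quoted above. A rare process such as Higgs production has a vastly smaller cross-section, which is why every fraction of an inverse femtobarn matters.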

Like the LHC (and like our friendly rival experiment CMS), ATLAS runs 24/7. The ATLAS control room is populated by shifters, who work there for eight hours at a time. There’s a shifter for each detector system within ATLAS, and overseeing everything is a "shift leader". If something goes wrong, and the shift crew don't know how to fix it, they can call upon one of a large pool of on-call experts, ready to come to the control room at a moment's notice, day or night, to fix the problem. Finally, in charge of everything are the ATLAS run coordinators.

Some weeks, I am an on-call expert for a part of the tracking system. Other weeks, I am a "run manager", whose role fits somewhere between the shift leader and the run coordinators. This can be a pretty tough job! Even when everything in ATLAS is running relatively smoothly there are always little things that go wrong, and plans for fixes and improvements that need to be scheduled for the times when the LHC isn't delivering collisions. But when something does go wrong with ATLAS, the job gets a whole lot tougher…

Since the start of 2010, ATLAS has recorded over 95% of the integrated luminosity that the LHC has thrown at us. That's pretty good, by any standards. But not good enough – we want to record it all! In the heat of the moment – when the LHC is producing interesting collisions at an unprecedented rate, our rivals nine kilometres away are busily recording them, and there's all that new physics out there waiting to be discovered – one hour where we're not taking data can seem like a catastrophe.

And yet with a detector as complex as ATLAS, it's all too easy to lose an hour or more. Perhaps it takes some time to figure out what the problem is and which expert needs to be called. Perhaps it's the middle of the night and the expert needs a bit of time to get up, get dressed, and get to CERN. Perhaps a machine needs to be rebooted, which can take some minutes, and might require the current ATLAS data-taking "run" to be stopped and restarted (which itself can take up to half an hour).

Recently I was in the control room, helping out the shift leader, as no fewer than five independent problems occurred, one after the other, just as the LHC was starting to give us useful collisions for that afternoon – exactly the kind of data we want to take to Lepton Photon. Sorting it out swiftly involved lots of frantic rushing between different shifter desks and phone calls to experts. All these little bits of time add up, and all are accounted for in gruesome detail at the next morning's operations meeting. However, the idea here is not to apportion blame, but to discuss solutions and devise plans for what should be done if the problem comes up again.

A good example occurred two weeks ago, when a particular problem came up a few times: a type of computer lost communication with the network and prevented ATLAS from recording data. To fix this, the ATLAS run had to be paused, the machine rebooted, and the run resumed. However, the symptoms of the problem were not obvious, and it happened to consecutive shift crews. It took several phone calls and expert investigations before the problem was finally fixed. Each time, more than an hour was lost. After discussion with the run coordinators, the relevant expert wrote some precise instructions for what to look for, and a script that could be executed by the shift leader. That same night, the problem happened twice, the shift leader took the right action, and only about 10 minutes' worth of data were lost. Or, to look at it another way, about 100 minutes' worth of data were saved. And that's a few more fractions of an inverse femtobarn where the Higgs could be hiding!